Ma Ge 40_02: Linux Cluster Series, Part 16: highly available MySQL based on drbd + corosync (very useful)

drbd + corosync

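Resources managed by a high-availability cluster must never start automatically at boot.

So on the second node, 192.168.0.55, which is currently the DRBD primary, unmount the DRBD device and demote the node to secondary.

On the second node, 192.168.0.55: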

[root@node2 ~]# drbd-overview

0:mydrbd Connected Primary/Secondary UpToDate/UpToDate C r----- /mydata3 ext3 950M 18M 885M 2%

[root@node2 ~]#

[root@node2 ~]# umount /mydata3 # unmount the DRBD-backed file system

[root@node2 ~]# drbdadm secondary mydrbd # demote to secondary

[root@node2 ~]# drbd-overview # confirm that everything is fine

0:mydrbd Connected Secondary/Secondary UpToDate/UpToDate C r-----

[root@node2 ~]#
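The role check above can also be scripted. This is a minimal sketch, assuming `drbd-overview` prints one line per resource in the format shown above; the helper name `drbd_local_role` is hypothetical and not part of DRBD.

```shell
# Print the local role (the part before the slash) of one DRBD resource,
# reading drbd-overview output from stdin.
# drbd_local_role is a hypothetical helper name used only in this sketch.
drbd_local_role() {
    awk -v res="$1" '$1 ~ (":" res "$") { split($3, role, "/"); print role[1] }'
}

# Typical use on a node (needs DRBD installed):
#   drbd-overview | drbd_local_role mydrbd
```

Both nodes should report Secondary before the drbd service is stopped and handed over to the cluster.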

On the first node, 192.168.0.45:

Stop the service:

[root@node1 ~]# service drbd stop

Stopping all DRBD resources: .

[root@node1 ~]# chkconfig --list drbd

drbd 0:off 1:off 2:on 3:on 4:on 5:on 6:off

[root@node1 ~]# chkconfig drbd off # make sure it does not start at boot

[root@node1 ~]#

On the second node, 192.168.0.55:

Stop the service:

[root@node2 ~]# service drbd stop

Stopping all DRBD resources: .

[root@node2 ~]#

[root@node2 ~]# chkconfig --list drbd

drbd 0:off 1:off 2:on 3:on 4:on 5:on 6:off

[root@node2 ~]# chkconfig drbd off # make sure it does not start at boot

[root@node2 ~]#
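Whether the init script is still enabled at boot can be checked mechanically. This is a small sketch, assuming `chkconfig` is run with `LANG=C` so that runlevels print as `on`/`off`; the helper name `service_enabled_at_boot` is hypothetical.

```shell
# Succeed (exit 0) if any runlevel in `chkconfig --list <service>` output is "on".
# Reads the output from stdin; service_enabled_at_boot is a hypothetical name.
service_enabled_at_boot() {
    grep -Eq '[0-6]:on([[:space:]]|$)'
}

# Typical use on each node (LANG=C forces English on/off labels):
#   LANG=C chkconfig --list drbd | service_enabled_at_boot && echo "drbd still starts at boot"
```

After `chkconfig drbd off` the check should fail on both nodes, which is the desired state for a cluster-managed service.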

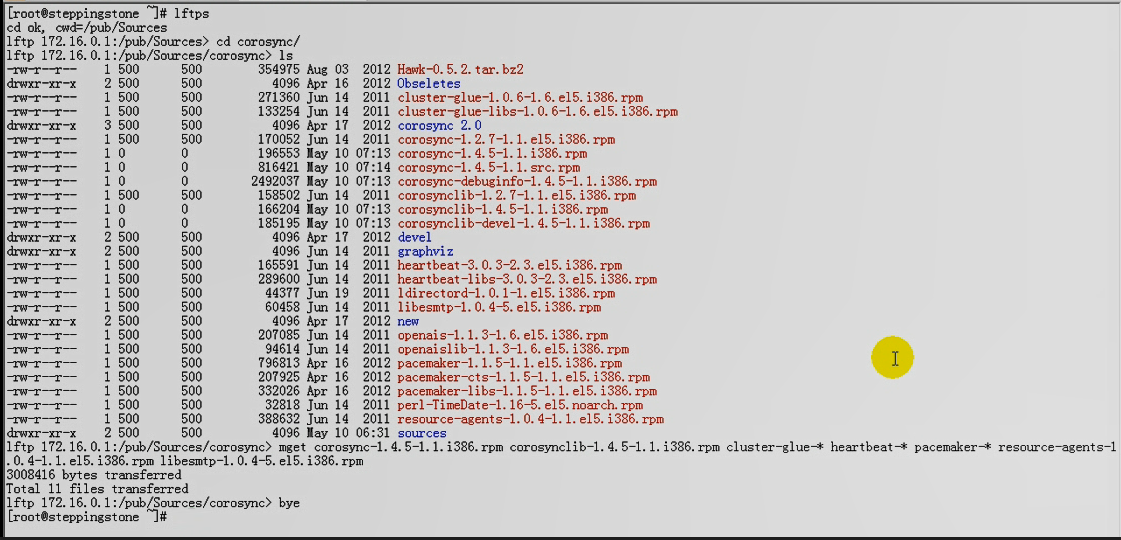

From the jump host, 192.168.0.75, install corosync on both nodes.

(Ma Ge is now using corosync 1.4.5; is this an rpm package he built himself?)

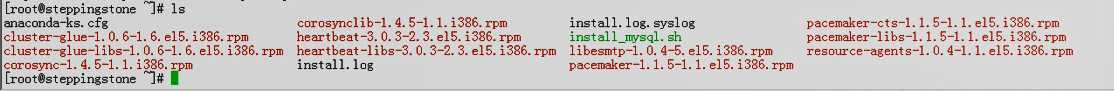

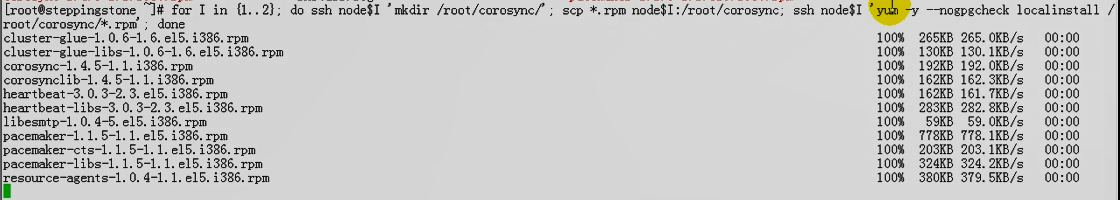

Copy the rpm packages below to every node and install them.

Run the installation from the jump host:

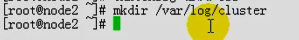

Create the directory /var/log/cluster on every node of the HA cluster:

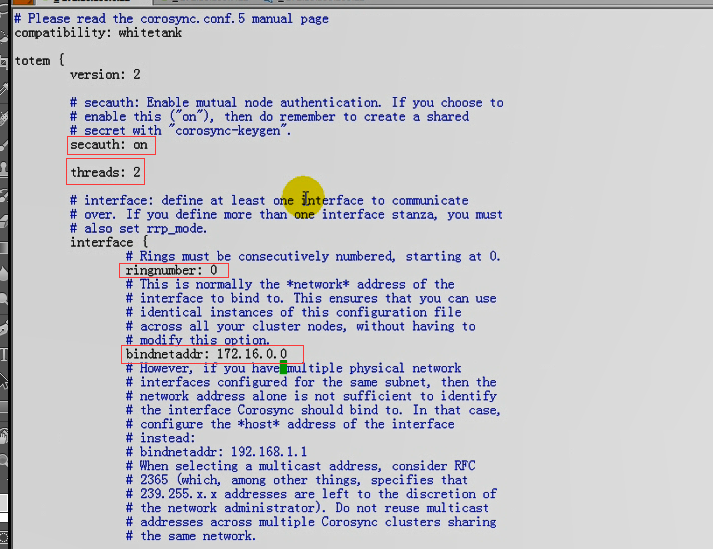

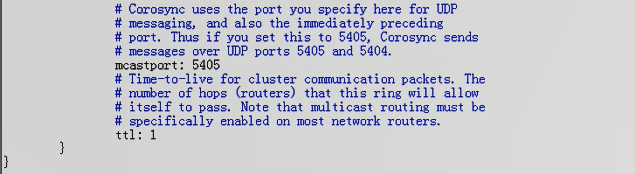

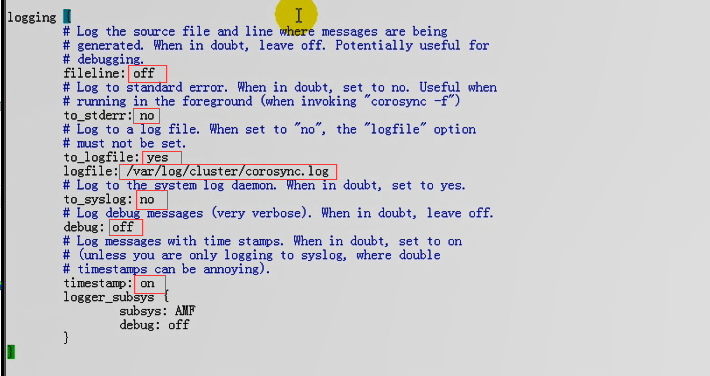

在每个节点上修改配置文件

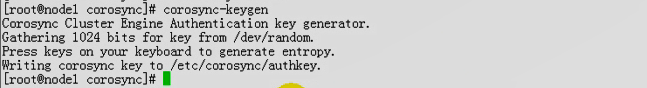

生成密钥

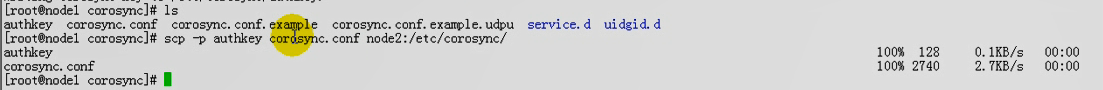

复制密钥和配置文件到另一个节点

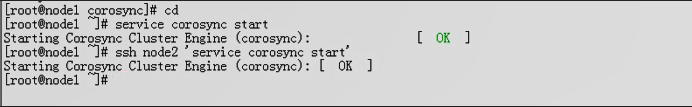
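The exact commands are only visible in the screenshots, so the following is a sketch under assumptions rather than a transcript: it assumes the standard `corosync-keygen` tool, the default `/etc/corosync` paths, and a peer reachable as `node2`. The `run` wrapper and the `DRY_RUN` variable are hypothetical additions that let the steps be previewed without touching the cluster.

```shell
# Commands for generating the corosync key on this node and copying it, together
# with corosync.conf, to the peer. With DRY_RUN set, the commands are only printed.
# PEER and the run() wrapper are assumptions of this sketch.
PEER=${PEER:-node2}

run() {
    if [ -n "$DRY_RUN" ]; then
        echo "$*"
    else
        "$@"
    fi
}

distribute_corosync_config() {
    run corosync-keygen                       # writes /etc/corosync/authkey
    run scp -p /etc/corosync/authkey /etc/corosync/corosync.conf "$PEER:/etc/corosync/"
}

# Preview first:  DRY_RUN=1; distribute_corosync_config
```

Using `scp -p` keeps the restrictive permissions on the key file, which corosync expects.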

Start corosync on both nodes:

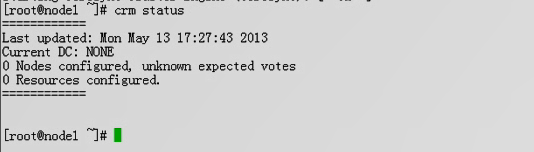

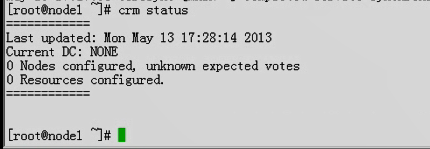

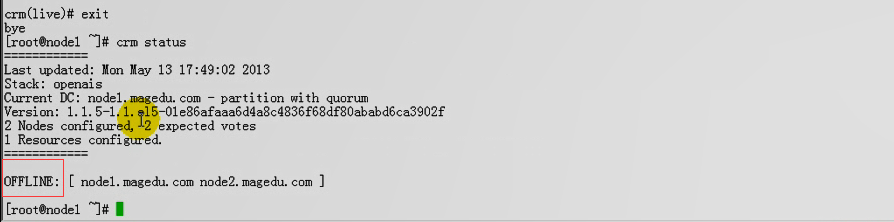

查看高可用 马哥这边有问题

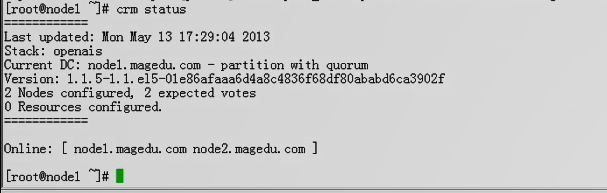

我这边还是用原来的版本,当然没有问题

[root@node1 ~]# service corosync start

Starting Corosync Cluster Engine (corosync): [确定]

[root@node1 ~]# ssh node2 'service corosync start'

Starting Corosync Cluster Engine (corosync): [确定]

[root@node1 ~]# crm status

============

Last updated: Mon Dec 21 16:38:45 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

The resources did not seem to have started at first; after waiting a little longer, they came up:

[root@node1 ~]# crm status

============

Last updated: Mon Dec 21 16:45:31 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

httpd (lsb:httpd): Started node1.magedu.com

webip (ocf::heartbeat:IPaddr): Started node1.magedu.com

[root@node1 ~]#
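Since the resources simply needed more time, polling is more reliable than rerunning `crm status` by hand. This is a minimal sketch, assuming the status output format shown above; `wait_started` and the `STATUS_CMD` override are hypothetical names introduced for illustration.

```shell
# Poll the cluster status until a resource is reported as Started.
# STATUS_CMD is an assumption of this sketch; it defaults to `crm status`
# and can be overridden to try the loop without a cluster.
STATUS_CMD=${STATUS_CMD:-crm status}

wait_started() {
    rsc=$1
    tries=${2:-30}          # number of polls before giving up
    while [ "$tries" -gt 0 ]; do
        # $STATUS_CMD is left unquoted on purpose so it splits into command + arguments
        if $STATUS_CMD 2>/dev/null | grep -Eq "^[[:space:]]*${rsc}[[:space:]].*Started"; then
            return 0
        fi
        tries=$((tries - 1))
        sleep 2
    done
    return 1
}

# Typical use:  wait_started webip 30 && echo "webip is up"
```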

马哥这边有问题,看马哥的解决思路

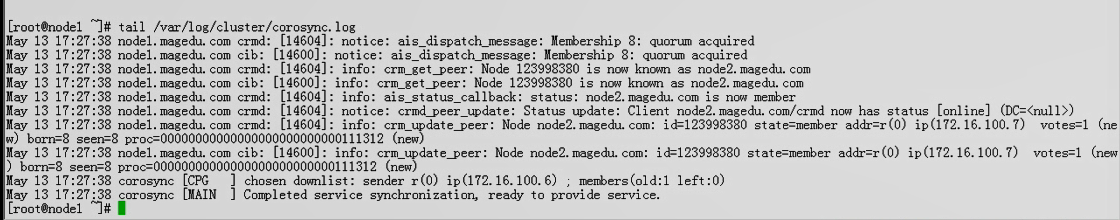

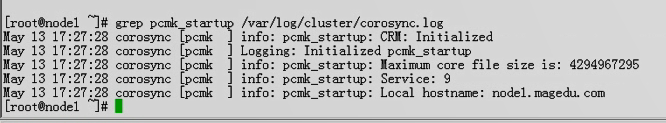

看日志

现在好了,证明,刚才是由于启动太慢的原因,如果稍微等一下就好了

下面的错,是跟STONITH设备相关的,不用关心

看看跟 corosync 引擎相关的,是否正常 包括启动和读取???一切正常 corosync服务没有问题

我先在第一个节点 192.168.0.45 上删除原来的资源,然后稍微改动一下配置文件

[root@node1 ~]# crm

crm(live)# resource

crm(live)resource# list

httpd (lsb:httpd) Started

webip (ocf::heartbeat:IPaddr) Started

crm(live)resource# httpd stop

ERROR: syntax: httpd stop

crm(live)resource# stop httpd

crm(live)resource# stop webip

crm(live)resource# cleanup httpd

Cleaning up httpd on node2.magedu.com

Cleaning up httpd on node1.magedu.com

Waiting for 3 replies from the CRMd...

crm(live)resource# cleanup webip

Cleaning up webip on node2.magedu.com

Cleaning up webip on node1.magedu.com

Waiting for 3 replies from the CRMd...

crm(live)resource#

crm(live)resource# cd

crm(live)# configure

crm(live)configure#

crm(live)configure# delete httpd

INFO: hanging colocation:httpd_with_webip deleted

INFO: hanging order:webip_before_httpd deleted

crm(live)configure# delete webip

INFO: hanging location:webip_on_node1 deleted

crm(live)configure#

crm(live)configure# verify

crm(live)configure# commit

crm(live)configure# show

node node1.magedu.com \

attributes standby="off"

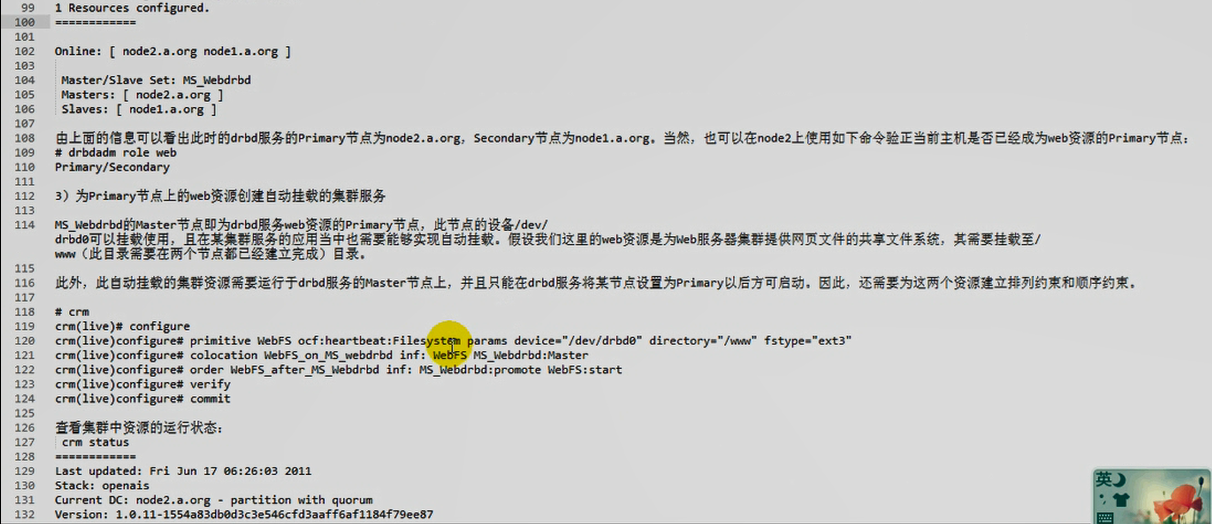

node node2.magedu.com \

attributes standby="off"

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1608540830"

rsc_defaults $id="rsc-options" \

resource-stickiness="200"

crm(live)configure# edit

node node1.magedu.com

node node2.magedu.com

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="200"

crm(live)configure# verify

crm(live)configure# commit

crm(live)configure#

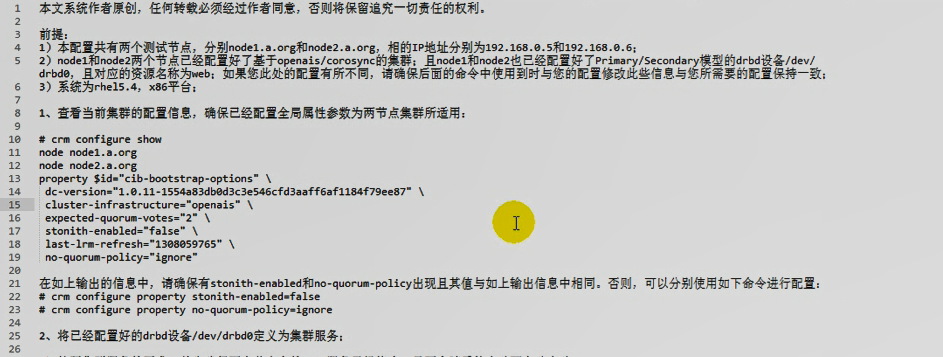

Let's follow what Ma Ge does.

Configure corosync:

[root@node1 ~]# crm configure

crm(live)configure# verify

crm(live)configure#

crm(live)configure# property # press Tab twice after this to list every property that can be set

batch-limit= pe-error-series-max=

cluster-delay= pe-input-series-max=

cluster-recheck-interval= pe-warn-series-max=

crmd-transition-delay= remove-after-stop=

dc-deadtime= shutdown-escalation=

default-action-timeout= start-failure-is-fatal=

default-resource-stickiness= startup-fencing=

election-timeout= stonith-action=

is-managed-default= stonith-enabled=

maintenance-mode= stonith-timeout=

no-quorum-policy= stop-all-resources=

node-health-green= stop-orphan-actions=

node-health-red= stop-orphan-resources=

node-health-strategy= symmetric-cluster=

node-health-yellow=

crm(live)configure# property stonith-enabled= # press Tab twice to show the help text

stonith-enabled (boolean, [true]): # a boolean; the default is true

Failed nodes are STONITH'd

crm(live)configure# property stonith-enabled=false # disable STONITH

crm(live)configure# verify # validate

crm(live)configure# commit # commit

crm(live)configure# property no-quorum-policy=

no-quorum-policy (enum, [stop]): What to do when the cluster does not have quorum

What to do when the cluster does not have quorum Allowed values: stop, freeze, ignore, suicide (suicide is not needed here)

crm(live)configure# property no-quorum-policy=ignore # ignore the loss of quorum

crm(live)configure# verify

crm(live)configure# commit

crm(live)configure#

# make resources prefer to stay on their current node

crm(live)configure# rsc_defaults

allow-migrate= is-managed= resource-stickiness=

description= migration-threshold= restart-type=

failure-timeout= multiple-active= target-role=

interval-origin= priority=

crm(live)configure# rsc_defaults resource-stickiness=100 # give every resource a default stickiness of 100

crm(live)configure# verify

crm(live)configure# commit

crm(live)configure# show

node node1.magedu.com

node node2.magedu.com

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure#
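The three cluster-wide settings made interactively above can also be kept in a small file. This is a sketch only: the helper name `emit_cluster_defaults` and the file path are hypothetical, and loading the file assumes that this crm shell version supports `configure load update`.

```shell
# Print the three cluster-wide settings from this section as crm configure commands.
# emit_cluster_defaults is a hypothetical helper name.
emit_cluster_defaults() {
    cat <<'EOF'
property stonith-enabled=false
property no-quorum-policy=ignore
rsc_defaults resource-stickiness=100
EOF
}

# Possible use: save to a file, load it, then review with `crm configure show`:
#   emit_cluster_defaults > /tmp/defaults.crm
#   crm configure load update /tmp/defaults.crm
```

Disabling STONITH and ignoring quorum loss is what these notes do for a two-node lab; the values are copied from the session above, not recommended defaults.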

对于drbd这个服务来讲,我们不需要在pacemaker中进行额外的配置,

我们只需要把drbd本身当作高可用的资源,定义成主从就可以了

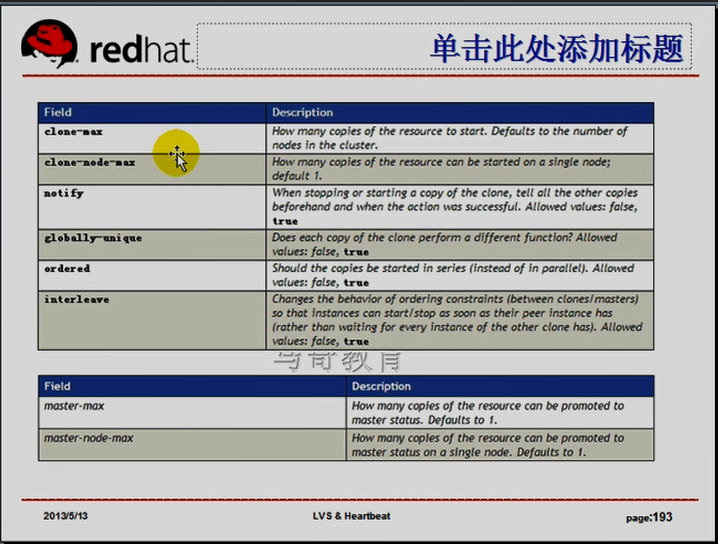

任何一个主从资源或克隆资源首先得是基本资源,,,还要配置为克隆类别的资源,只不过master/slave的克隆类型

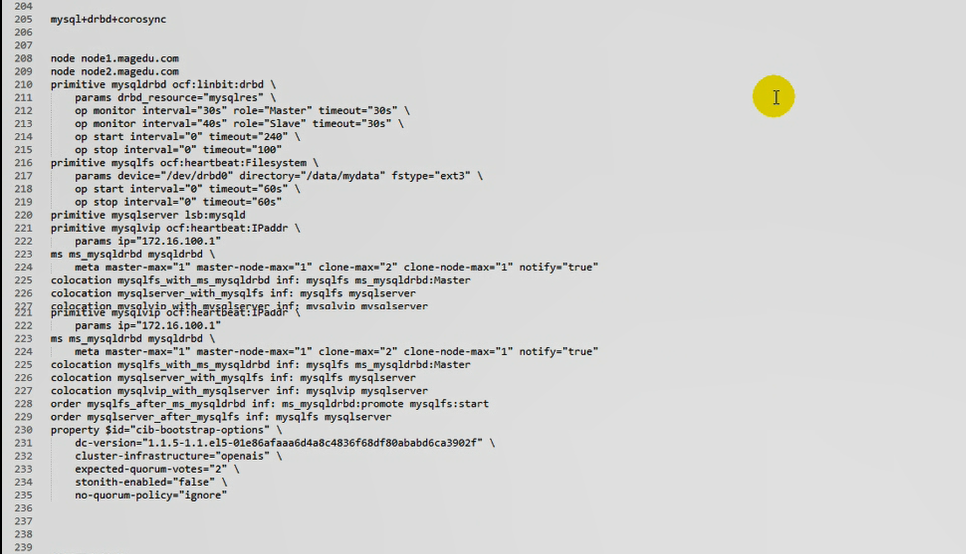

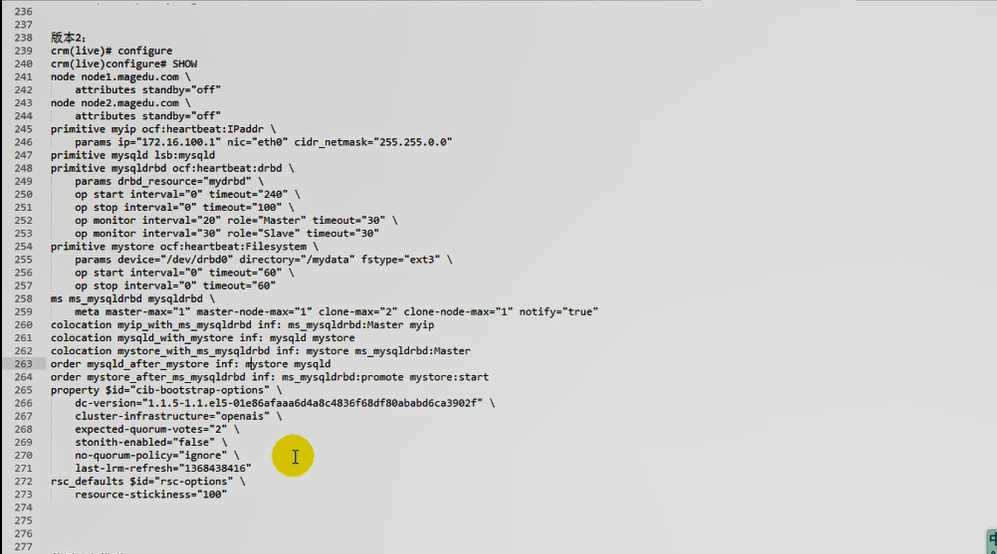

Below is the final version that I built myself:

crm(live)configure# show

node node1.magedu.com \

attributes standby="off"

node node2.magedu.com \

attributes standby="off"

primitive myip ocf:heartbeat:IPaddr \

params ip="192.168.0.50" nic="eth0" cidr_netmask="255.255.255.0"

primitive mysqld lsb:mysqld

primitive mysqldrbd ocf:heartbeat:drbd \

params drbd_resource="mydrbd" \

op start interval="0" timeout="240" \

op stop interval="0" timeout="100" \

op monitor interval="20" role="Master" timeout="30" \

op monitor interval="30" role="Slave" timeout="30"

primitive mystore ocf:heartbeat:Filesystem \

params device="/dev/drbd0" directory="/mydata3" fstype="ext3" \

op start interval="0" timeout="60" \

op stop interval="0" timeout="60"

ms ms_mysqldrbd mysqldrbd \

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"

colocation myip_with_ms_mysqldrbd inf: ms_mysqldrbd:Master myip

colocation mysqld_with_mystore inf: mysqld mystore

colocation mystore_with_ms_mysqldrbd inf: mystore ms_mysqldrbd:Master

order mysqld_after_mystore inf: mystore mysqld

order mystore_after_ms_mysqldrbd inf: ms_mysqldrbd:promote mystore:start

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1608700798"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

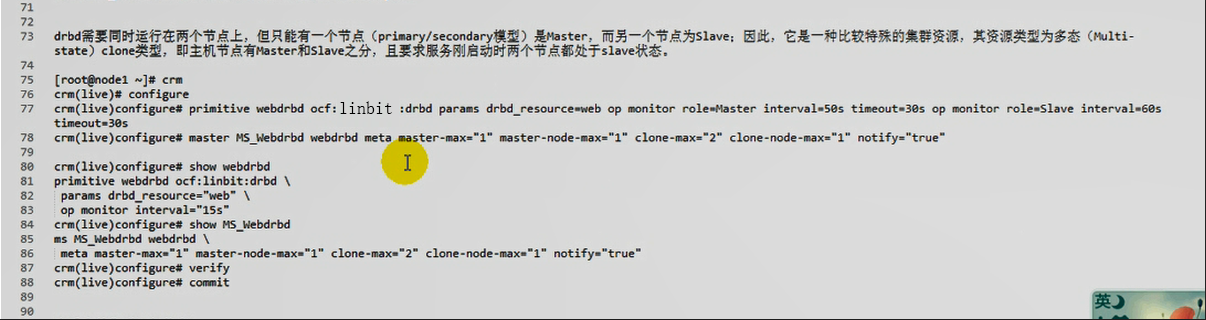

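crm(live)configure# primitive webdrbd ocf:linbit:drbd params drbd_resource=web op monitor role=Master interval=50s timeout=30s op monitor role=Slave interval=60s timeout=30s

# Defines a primitive resource named webdrbd that uses the ocf:linbit:drbd resource agent. params drbd_resource=web names the DRBD resource to manage. op declares an operation: op monitor role=Master interval=50s timeout=30s monitors the Master role every 50 seconds with a 30-second timeout, and op monitor role=Slave interval=60s timeout=30s monitors the Slave role every 60 seconds with a 30-second timeout. These values must not be lower than the minimums the agent advises.

# ocf:linbit:drbd and ocf:heartbeat:drbd are basically the same, although the heartbeat agent reports itself as deprecated

crm(live)configure# master MS_Webdrbd webdrbd meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"

# master defines a master/slave resource. MS_Webdrbd is the name of the master/slave resource and webdrbd is the primitive being cloned. meta introduces the meta attributes: master-max="1" allows at most one instance to be promoted to master (this is not a dual-primary setup); master-node-max="1" allows at most one master instance per node; clone-max="2" allows at most two clone instances in total, master and slave alike; clone-node-max="1" allows at most one clone instance per node; notify="true" tells the other clone instances when an instance is started or stopped. notify is a meta attribute here, not an op.

crm(live)configure# show webdrbd # show the primitive

crm(live)configure# show MS_Webdrbd # show the master/slave resource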

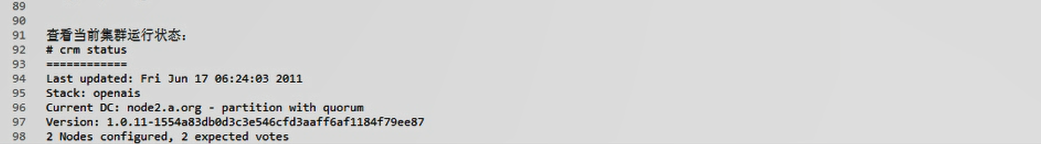

Check the current cluster status:

# crm status

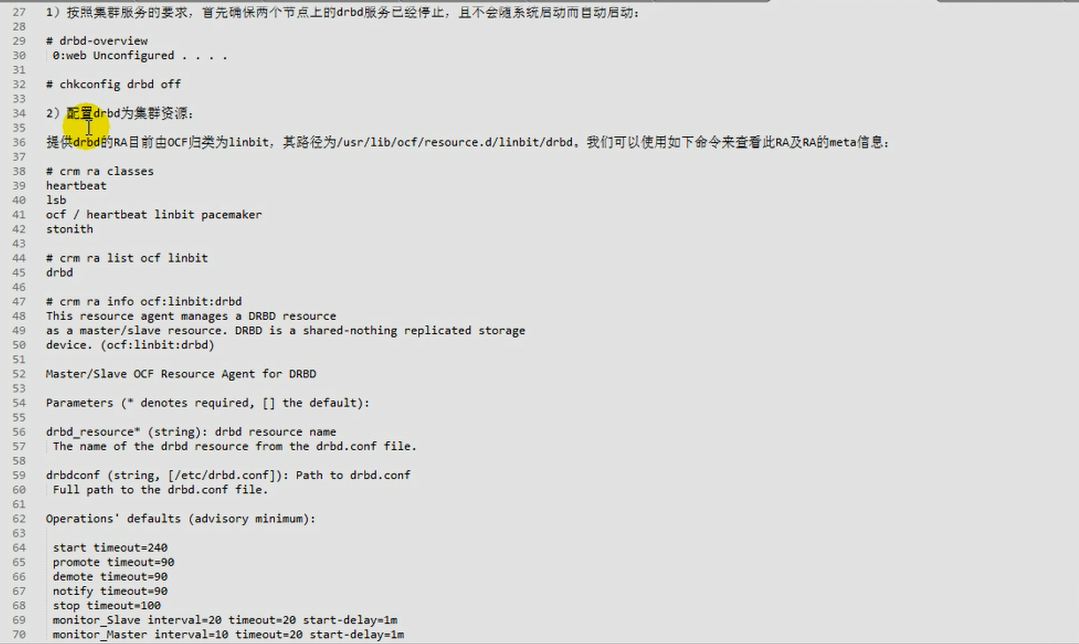

To configure drbd as a cluster resource, a resource agent is required.

On the first node, 192.168.0.45:

[root@node1 ~]# crm

crm(live)# ra

crm(live)ra# providers drbd

heartbeat linbit # both heartbeat and linbit provide a drbd resource agent; DRBD itself is developed by LINBIT; either provider can be used here

crm(live)ra# classes # the ocf class now lists one more provider, linbit

heartbeat

lsb

ocf / heartbeat linbit pacemaker

stonith

crm(live)ra#

crm(live)ra# meta ocf:heartbeat:drbd # show the metadata of the heartbeat agent

Manages a DRBD resource (deprecated) (ocf:heartbeat:drbd)

Deprecation warning: This agent is deprecated and may be removed from

a future release. See the ocf:linbit:drbd resource agent for a

supported alternative. --

This resource agent manages a Distributed

Replicated Block Device (DRBD) object as a master/slave

resource. DRBD is a mechanism for replicating storage; please see the

documentation for setup details.

Parameters (* denotes required, [] the default):

drbd_resource* (string, [drbd0]): drbd resource name # required parameter: the name of the DRBD resource

The name of the drbd resource from the drbd.conf file.

drbdconf (string, [/etc/drbd.conf]): Path to drbd.conf # the drbd configuration file

Full path to the drbd.conf file.

clone_overrides_hostname (boolean, [no]): Override drbd hostname

Whether or not to override the hostname with the clone number. This can

be used to create floating peer configurations; drbd will be told to

use node_<cloneno> as the hostname instead of the real uname,

which can then be used in drbd.conf.

:

crm(live)ra# meta ocf:linbit:drbd # the linbit agent

Manages a DRBD device as a Master/Slave resource (ocf:linbit:drbd)

This resource agent manages a DRBD resource as a master/slave resource.

DRBD is a shared-nothing replicated storage device.

Note that you should configure resource level fencing in DRBD,

this cannot be done from this resource agent.

See the DRBD User's Guide for more information.

http://www.drbd.org/docs/applications/

Parameters (* denotes required, [] the default):

drbd_resource* (string): drbd resource name # same as in the heartbeat agent above

The name of the drbd resource from the drbd.conf file.

drbdconf (string, [/etc/drbd.conf]): Path to drbd.conf

Full path to the drbd.conf file.

stop_outdates_secondary (boolean, [false]): outdate a secondary on stop

Recommended setting: until pacemaker is fixed, leave at default (disabled).

Note that this feature depends on the passed in information in

OCF_RESKEY_CRM_meta_notify_master_uname to be correct, which unfortunately is

not reliable for pacemaker versions up to at least 1.0.10 / 1.1.4.

If a Secondary is stopped (unconfigured), it may be marked as outdated in the

drbd meta data, if we know there is still a Primary running in the cluster.

Note that this does not affect fencing policies set in drbd config,

but is an additional safety feature of this resource agent only.

You can enable this behaviour by setting the parameter to true.

If this feature seems to not do what you expect, make sure you have defined

fencing policies in the drbd configuration as well.

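Operations' defaults (advisory minimum): # the timeouts crm applies by default may be shorter than the advised values below; unless they are raised, crm prints warnings

start timeout=240 # start times out after 240 seconds

promote timeout=90 # promote (switch to the master role and get ready to serve) times out after 90 seconds

demote timeout=90 # demote (give up the role currently held) times out after 90 seconds

notify timeout=90 # notify (tell the other clone instances that an instance has started or stopped) times out after 90 seconds

stop timeout=100 # stop times out after 100 seconds

monitor_Slave interval=20 timeout=20 # monitor the slave every 20 seconds

monitor_Master interval=10 timeout=20 # monitor the master every 10 seconds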
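The advisory minimums listed above can be turned into a quick check before a primitive is defined. This is a sketch that only encodes the numbers printed by `meta ocf:linbit:drbd` in this session; the helper names `advised_timeout` and `timeout_ok` are hypothetical.

```shell
# Advisory minimum timeouts (seconds) as printed by `meta ocf:linbit:drbd` above.
advised_timeout() {
    case "$1" in
        start)                 echo 240 ;;
        stop)                  echo 100 ;;
        promote|demote|notify) echo 90 ;;
        *)                     return 1 ;;   # unknown operation
    esac
}

# Exit 0 if the chosen timeout is at least the advised minimum for the operation,
# 1 if it is too small, 2 if the operation is unknown.
# timeout_ok is a hypothetical helper name.
timeout_ok() {
    min=$(advised_timeout "$1") || return 2
    [ "$2" -ge "$min" ]
}

# Example:  timeout_ok start 20 || echo "start timeout below the advised minimum"
```

The crm default of 20 seconds fails this check for both start and stop, which matches the warnings crm prints when no timeouts are given.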

On the first node, 192.168.0.45:

[root@node1 ~]# crm

crm(live)# configure

Signon to CIB failed: connection failed

Init failed, could not perform requested operations

ERROR: cannot parse xml: no element found: line 1, column 0

crm(live)configure# exit

bye

That fails, so let me start corosync and try again:

[root@node1 ~]# service corosync start

Starting Corosync Cluster Engine (corosync): [  OK  ]

[root@node1 ~]# ssh node2 'service corosync start'

Starting Corosync Cluster Engine (corosync): [  OK  ]

[root@node1 ~]# crm

crm(live)# configure

crm(live)configure#

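crm(live)configure# primitive mydrbd ocf:linbit:drbd params drbd_resource=mydrbd # the first mydrbd is the name of the HA (cluster) resource; ocf:linbit:drbd is the resource agent; params introduces the parameters; drbd_resource=mydrbd says the DRBD resource is named mydrbd

WARNING: mydrbd: default timeout 20s for start is smaller than the advised 240

WARNING: mydrbd: default timeout 20s for stop is smaller than the advised 100

# warning: the default start timeout is 20 seconds, but the advised value is 240 seconds

# warning: the default stop timeout is 20 seconds, but the advised value is 100 seconds

# unless the timeouts are changed, these warnings are printed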

crm(live)configure#

crm(live)configure# show

node node1.magedu.com

node node2.magedu.com

primitive mydrbd ocf:linbit:drbd \

params drbd_resource="mydrbd"

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure#

crm(live)configure# cd # back to the top level

There are changes pending. Do you want to commit them? n # do not commit

crm(live)# configure

crm(live)configure# show # the mydrbd definition is gone now

node node1.magedu.com

node node2.magedu.com

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure#

crm(live)configure# primitive mydrbd ocf:linbit:drbd params drbd_resource=mydrbd op start timeout=240 op stop timeout=100 op monitor role=Master interval=10s timeout=20s op monitor role=Slave interval=20s timeout=20 # redefine the mydrbd HA resource; op start timeout=240 and op stop timeout=100 use exactly the advised minimums, since the values must not be lower

# further operations: op monitor role=Master interval=10s timeout=20s sets the monitor interval and timeout for the master; the s suffix for seconds is optional

# op monitor role=Slave interval=20s timeout=20s sets the monitor interval and timeout for the slave

crm(live)configure#

crm(live)configure# show

node node1.magedu.com

node node2.magedu.com

primitive mydrbd ocf:linbit:drbd \

params drbd_resource="mydrbd" \

op start interval="0" timeout="240" \

op stop interval="0" timeout="100" \

op monitor interval="10s" role="Master" timeout="20s" \

op monitor interval="20s" role="Slave" timeout="20"

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

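crm(live)configure# verify # validate; do not commit yet, because the resource still has to be turned into a master/slave resource

crm(live)configure#

crm(live)configure# help ms # the ms and master commands are the same thing; both stand for master/slave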

The `ms` command creates a master/slave resource type. It may contain a

single primitive resource or one group of resources.

Usage:

...............

ms <name> <rsc> # defines a master/slave resource: <name> is its name and <rsc> is the resource to turn into master/slave

[meta attr_list]

[params attr_list]

attr_list :: [$id=<id>] <attr>=<val> [<attr>=<val>...] | $id-ref=<id>

...............

Example:

...............

ms disk1 drbd1 \

meta notify=true globally-unique=false # some meta values: notify=true enables notifications; globally-unique says whether each instance has a globally unique name

............... # the other attributes are master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"

.Note on `id-ref` usage

****************************

Instance or meta attributes (`params` and `meta`) may contain

a reference to another set of attributes. In that case, no other

:

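crm(live)configure# ms ms_mydrbd mydrbd meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 # ms_mydrbd is the name of the master/slave resource and mydrbd is the existing primitive, so this turns mydrbd into a master/slave resource called ms_mydrbd. meta sets the meta attributes: master-max=1 allows at most one master instance; master-node-max=1 allows at most one master instance per node; clone-max=2 allows at most two clone instances; clone-node-max=1 does not limit how many nodes may run a slave, it limits how many instances may run on each node (there are two clone instances, but each node can start only one)

notify=true: when a clone instance starts or stops, it tells the other clone instances "I have arrived" or "I am leaving". The lecture treats it as the default and leaves it out here; as far as I can tell the Pacemaker documentation lists false as the default, and the later working configuration sets notify=true explicitly.

globally-unique: whether each clone instance must be globally unique, that is, perform a different function from the other instances. It is not set here; the lecture describes true as the default, but as far as I can tell the Pacemaker documentation lists false.

crm(live)configure# verify # validate

crm(live)configure# commit # commit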

crm(live)configure#

crm(live)configure# show

node node1.magedu.com

node node2.magedu.com

primitive mydrbd ocf:linbit:drbd \ # two resources: one is the primitive

params drbd_resource="mydrbd" \

op start interval="0" timeout="240" \

op stop interval="0" timeout="100" \

op monitor interval="10s" role="Master" timeout="20s" \

op monitor interval="20s" role="Slave" timeout="20"

ms ms_mydrbd mydrbd \ # the other is the master/slave resource

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1"

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure#

crm(live)configure# cd

crm(live)# status

============

Last updated: Wed Dec 23 12:28:30 2020

Stack: openais

Current DC: node2.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

1 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mydrbd

mydrbd:0 (ocf::linbit:drbd): Slave node2.magedu.com (unmanaged) FAILED

Stopped: [ mydrbd:1 ]

Failed actions: # mine seems to have a problem as well

mydrbd:0_start_0 (node=node2.magedu.com, call=3, rc=6, status=complete): not configured

mydrbd:0_stop_0 (node=node2.magedu.com, call=4, rc=6, status=complete): not configured

crm(live)#
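The failed actions and their return codes can be pulled out of the status output for a quicker overview. This is a minimal sketch, assuming the `Failed actions:` layout shown above; `failed_actions` is a hypothetical helper name. Here rc=6 is what the status output itself labels "not configured".

```shell
# List failed actions and their return codes from `crm status` output on stdin.
# failed_actions is a hypothetical helper name.
failed_actions() {
    awk '
        /^Failed actions:/ { in_failed = 1; next }
        in_failed && match($0, /rc=[0-9]+/) {
            print $1, substr($0, RSTART + 3, RLENGTH - 3)
        }
    '
}

# Typical use:  crm status | failed_actions
```

A "not configured" result points at the resource definition rather than at the node, so the definition is the first thing to compare against a working one.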

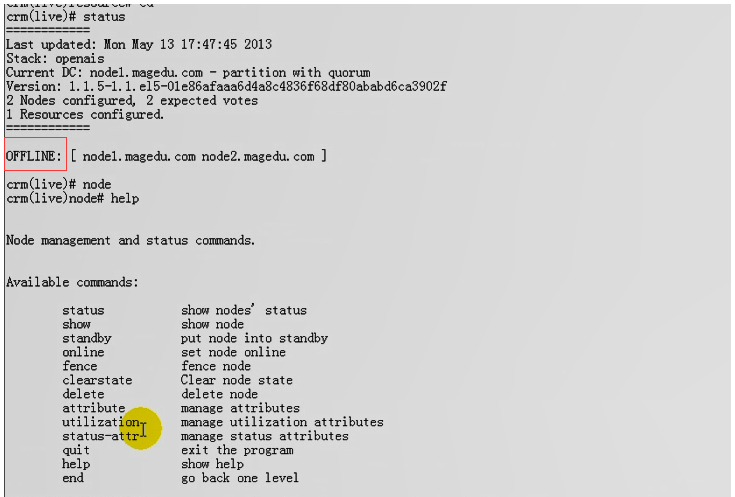

马哥发现离线了

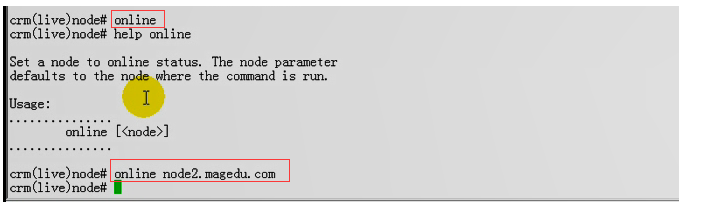

马哥让节点上线

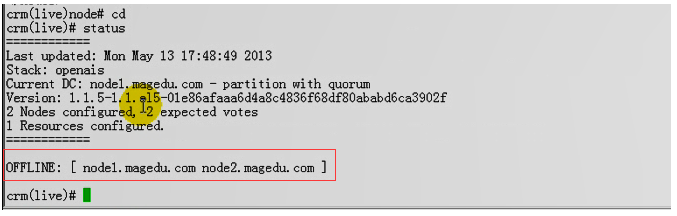

还是离线 OFFLINE

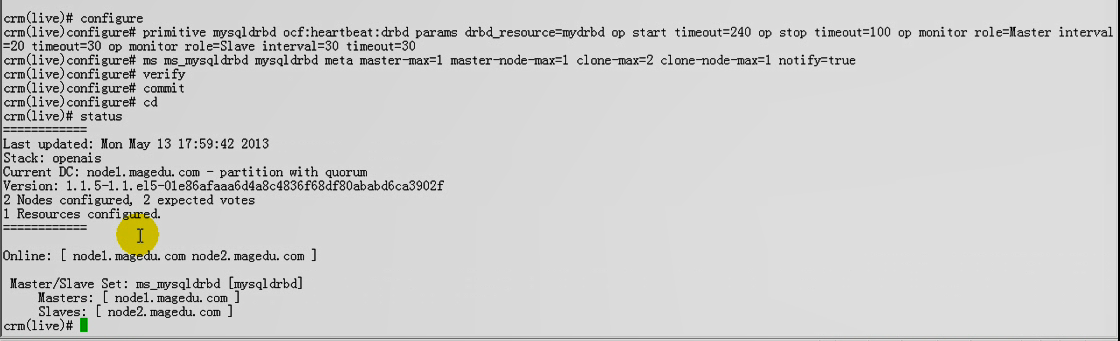

马哥换 ocf:heartbeat:drbd 资源代理 试试,并且把资源不使用mydrbd了(上面配置的高可用集群的资源名mydrbd与drbd的资源名相同,都是mydrbd)

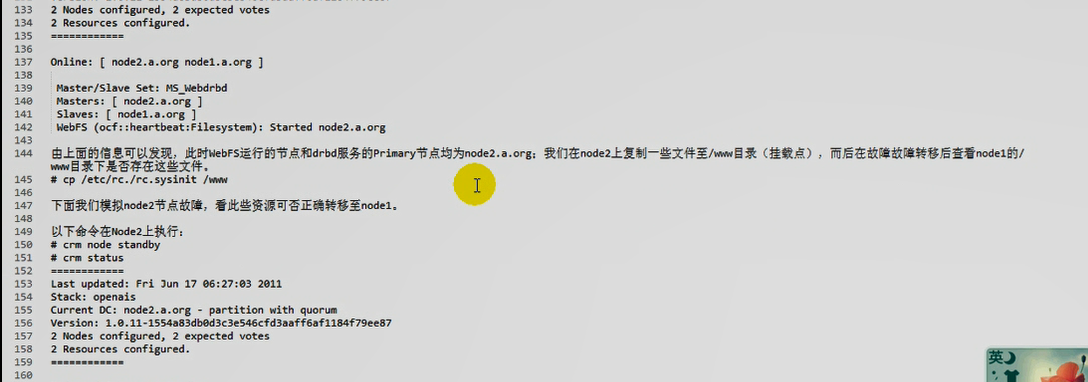

由下图,此时可以了

我也跟着马哥做吧,,

在第一个节点 192.168.0.45 上

第一步停止,清理资源

crm(live)resource# stop mydrbd

crm(live)resource# stop ms_mydrbd

crm(live)resource# commit

crm(live)resource# cleanup ms_mydrbd

Cleaning up mydrbd:0 on node2.magedu.com

Cleaning up mydrbd:0 on node1.magedu.com

Cleaning up mydrbd:1 on node2.magedu.com

Cleaning up mydrbd:1 on node1.magedu.com

Waiting for 5 replies from the CRMd.....

crm(live)resource# cleanup mydrbd

Step 2: delete the resources

crm(live)configure# delete ms_mydrbd

crm(live)configure# delete mydrbd

crm(live)configure# verify

crm(live)configure# commit

WARNING: CIB changed in the meantime: won't touch it!

Do you still want to commit? y

crm(live)configure# show

node node1.magedu.com

node node2.magedu.com

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1608700798"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure#

crm(live)configure# primitive mysqldrbd ocf:heartbeat:drbd params drbd_resource=mydrbd op start timeout=240 op stop timeout=100 op monitor role=Master interval=20 timeout=30 op monitor role=Slave interval=30 timeout=30

crm(live)configure#

crm(live)configure# ms ms_mysqldrbd mysqldrbd meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 notify=true

crm(live)configure# verify

crm(live)configure# commit

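crm(live)configure# status # it works now (was the linbit agent the problem, or the duplicate name? My guess is the HA resource name clashing with the DRBD resource name; note that, unlike the failed attempt, this definition also sets notify=true)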

ERROR: syntax: status

crm(live)configure# cd

crm(live)# status

============

Last updated: Wed Dec 23 13:31:04 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

1 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node2.magedu.com ] # node2 is the master

Slaves: [ node1.magedu.com ]

crm(live)configure# exit

bye

[root@node1 ~]# drbd-overview # double-check: node1 is secondary, node2 is primary

0:mydrbd Connected Secondary/Primary UpToDate/UpToDate C r-----

[root@node1 ~]#

On the second node, 192.168.0.55:

[root@node2 ~]# drbd-overview # node1 is secondary, node2 is primary

0:mydrbd Connected Primary/Secondary UpToDate/UpToDate C r-----

[root@node2 ~]#

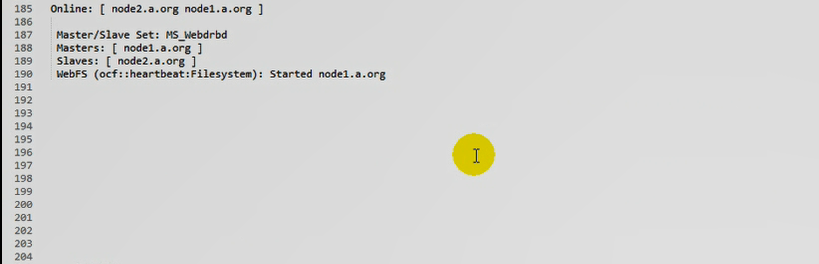

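Now put node2 into standby: the former slave (node1) becomes the master. The former master (node2) goes offline and does not become a slave; only when it comes back online later does it become the slave.

On the second node, 192.168.0.55: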

[root@node2 ~]# crm node standby

[root@node2 ~]# crm status

============

Last updated: Wed Dec 23 14:22:39 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

1 Resources configured.

============

Node node2.magedu.com: standby

Online: [ node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node1.magedu.com ] # node1 is now the master

Stopped: [ mysqldrbd:0 ] # the instance on the standby node is stopped

[root@node2 ~]#

[root@node2 ~]# crm node online # bring the node back online

[root@node2 ~]# crm status

============

Last updated: Wed Dec 23 14:24:28 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

1 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node1.magedu.com ] # node1 is still the master

Slaves: [ node2.magedu.com ] # node2 has become the slave

[root@node2 ~]#

[root@node2 ~]# drbd-overview

0:mydrbd Connected Secondary/Primary UpToDate/UpToDate C r-----

[root@node2 ~]#

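On the first node, 192.168.0.45 (node1 is now the master):

[root@node1 ~]# ls /mydata3 # the DRBD mount point is empty, so the DRBD device is not mounted

[root@node1 ~]#

So defining drbd as an HA resource only takes care of switching the master/slave roles; it does not mount the file system that lives on the DRBD device.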
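An empty directory listing is only indirect evidence; the mount table answers the question directly. This is a small sketch, assuming a Linux `/proc/mounts` table; `is_mounted` is a hypothetical helper name, and the optional second argument exists only so the function can be tried against a sample file.

```shell
# Exit 0 if the directory appears as a mount point in a mounts table.
# The second argument defaults to /proc/mounts; is_mounted is a hypothetical helper name.
is_mounted() {
    awk -v dir="$1" '$2 == dir { found = 1 } END { exit !found }' "${2:-/proc/mounts}"
}

# Typical use on the master node:
#   is_mounted /mydata3 || echo "/mydata3 is not mounted"
```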

On the first node, 192.168.0.45:

crm(live)configure# primitive mystore ocf:heartbeat:Filesystem params device=/dev/drbd0 directory=/mydata3 fstype=ext3 # mystore is the resource name; ocf:heartbeat:Filesystem is the resource agent; params introduces the parameters device, directory, and fstype (the file system type)

WARNING: mystore: default timeout 20s for start is smaller than the advised 60

WARNING: mystore: default timeout 20s for stop is smaller than the advised 60

# warning: the advised minimum is 60 seconds, but the default is 20 seconds

crm(live)configure# edit # simply use edit

node node1.magedu.com

node node2.magedu.com \

attributes standby="off"

primitive mysqldrbd ocf:heartbeat:drbd \

params drbd_resource="mydrbd" \

op start interval="0" timeout="240" \

op stop interval="0" timeout="100" \

op monitor interval="20" role="Master" timeout="30" \

op monitor interval="30" role="Slave" timeout="30"

primitive mystore ocf:heartbeat:Filesystem \

params device="/dev/drbd0" directory="/mydata3" fstype="ext3" # delete this mystore definition

ms ms_mysqldrbd mysqldrbd \

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1608700798"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# edit # after deleting mystore

node node1.magedu.com

node node2.magedu.com \

attributes standby="off"

primitive mysqldrbd ocf:heartbeat:drbd \

params drbd_resource="mydrbd" \

op start interval="0" timeout="240" \

op stop interval="0" timeout="100" \

op monitor interval="20" role="Master" timeout="30" \

op monitor interval="30" role="Slave" timeout="30"

ms ms_mysqldrbd mysqldrbd \

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"

property $id="cib-bootstrap-options" \

dc-version="1.0.12-unknown" \

cluster-infrastructure="openais" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1608700798"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# primitive mystore ocf:heartbeat:Filesystem params device=/dev/drbd0 directory=/mydata3 fstype=ext3 op start timeout=60 op stop timeout=60 # redefine mystore

crm(live)configure#

crm(live)configure# verify # validate, but do not commit yet: the file system is mounted on the master node, so mystore has to stay with the master, which requires a colocation constraint

crm(live)configure#

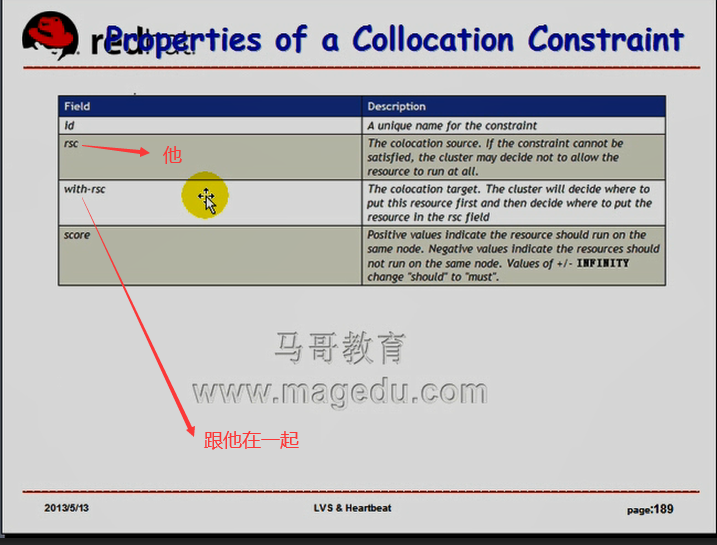

crm(live)configure# colocation mystore_with_ms_mysqldrbd inf:mystore ms_mysqldrbd:Master # colocation constraint: mystore must run together with the master of ms_mysqldrbd

ERROR: syntax in colocation: colocation mystore_with_ms_mysqldrbd inf:mystore ms_mysqldrbd:Master # error: there must be a space after the colon in inf:

crm(live)configure# colocation mystore_with_ms_mysqldrbd inf: mystore ms_mysqldrbd:Master

crm(live)configure#

crm(live)configure# order mystore_after_ms_mysqldrbd mandatory: ms_mysqldrbd:promote mystore:start # ordering matters: the node must be promoted to master before the file system can be mounted, hence the order constraint. mandatory appears to be accepted in place of inf (this command uses mandatory). ms_mysqldrbd:promote means the promotion must have completed before mystore:start runs

crm(live)configure#

crm(live)configure# verify # validate

crm(live)configure# commit # commit

crm(live)configure#
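The syntax error hit above (no space after `inf:`) is easy to repeat, so constraint lines kept in a file can be checked before they are pasted into crm. This is a rough sketch, not a crm parser: it only looks for a score such as `inf`, `mandatory`, or a number whose colon is immediately followed by more text. `score_spacing_ok` is a hypothetical helper name.

```shell
# Exit 0 if every score (inf, -inf, mandatory, or a number) followed by a colon
# is also followed by whitespace; exit 1 otherwise. Reads constraint lines from stdin.
# score_spacing_ok is a hypothetical helper name.
score_spacing_ok() {
    ! grep -Eq '(^|[[:space:]])[-+]?(inf|mandatory|[0-9]+):[^[:space:]]'
}

# Example:
#   echo 'colocation mystore_with_ms_mysqldrbd inf: mystore ms_mysqldrbd:Master' | score_spacing_ok
```

Role and action suffixes such as `ms_mysqldrbd:Master` or `mystore:start` are not flagged, because the text before their colon is a resource name rather than a score.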

crm(live)# status

============

Last updated: Wed Dec 23 15:54:09 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node1.magedu.com ] # the master is on node1

Slaves: [ node2.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node1.magedu.com # so mystore is on node1 as well

crm(live)#

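[root@node1 ~]# ls /mydata3 # files are visible, so the file system is mounted

inittab lost+found

# if node1 now goes offline, the resources move to node2 and node2 mounts the file system

[root@node1 ~]#

On the first node, 192.168.0.45:

[root@node1 ~]# crm node standby # put the node where the command is run into standby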

[root@node1 ~]#

[root@node1 ~]# crm status

============

Last updated: Wed Dec 23 15:57:43 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Node node1.magedu.com: standby

Online: [ node2.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node2.magedu.com ] # the master is node2

Stopped: [ mysqldrbd:1 ]

mystore (ocf::heartbeat:Filesystem): Started node2.magedu.com # the file system is mounted on node2 as well

[root@node1 ~]#

On the second node, 192.168.0.55:

[root@node2 ~]# ls /mydata3 # files are visible, so the failover worked

inittab lost+found

[root@node2 ~]#

On the first node, 192.168.0.45:

[root@node1 ~]# crm node online # bring node1 back online

[root@node1 ~]# crm status

============

Last updated: Wed Dec 23 16:03:55 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node2.magedu.com ]

Slaves: [ node1.magedu.com ] # node1 is now the slave and does not mount the file system

mystore (ocf::heartbeat:Filesystem): Started node2.magedu.com

[root@node1 ~]#
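When repeating the standby/online test, it helps to read the current master from the status output instead of scanning it by eye. This is a minimal sketch, assuming the `Masters: [ ... ]` line format shown above; `current_masters` is a hypothetical helper name.

```shell
# Print the node(s) listed on the "Masters:" line of `crm status` output (stdin).
# current_masters is a hypothetical helper name.
current_masters() {
    awk '$1 == "Masters:" { for (i = 3; i < NF; i++) print $i }'
}

# Typical use:  crm status | current_masters
```

Because of the resource stickiness configured earlier, the master should stay where it is when the other node comes back online, which is what the status output above shows.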

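We now have shared storage, and it fails over automatically whenever a node goes down.

Next we define a MySQL server that runs on the master node. If the master fails, MySQL runs on the former slave (which has by then become the master), and that node has exactly the same data as the old master.

That way, once failover completes and MySQL starts on the other node, it reads exactly the same data as before.

On the second node, 192.168.0.55:

The master is currently node2. The next steps put the master into standby with crm and then bring it back online; as a result, the master role moves to the other node (node1).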

[root@node2 ~]# crm node standby

[root@node2 ~]# crm status

============

Last updated: Wed Dec 23 16:10:35 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Node node2.magedu.com: standby

Online: [ node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node1.magedu.com ]

Stopped: [ mysqldrbd:0 ]

mystore (ocf::heartbeat:Filesystem): Started node1.magedu.com

[root@node2 ~]# crm node online

[root@node2 ~]# crm status

============

Last updated: Wed Dec 23 16:10:44 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node1.magedu.com ]

Slaves: [ node2.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node1.magedu.com

[root@node2 ~]#

通过上面现在看到 主节点是node1

在第一个节点 192.168.0.45 上

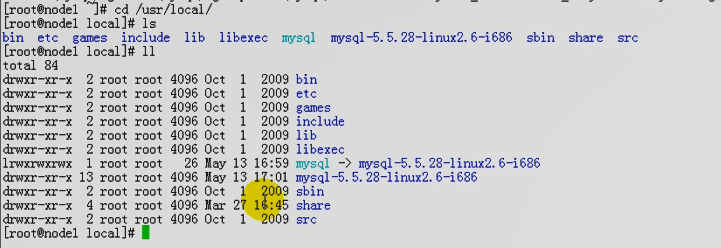

马哥在 node1 完成mysql的安装和配置,我这边以前己经弄好了

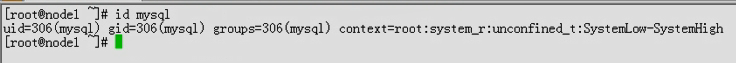

mysql用户有

mysql程序也安装好了

mysql目录 ,mysql 的 data目录的属主属组也是对的

[root@node1 ~]# id mysql

uid=306(mysql) gid=306(mysql) groups=306(mysql) context=root:system_r:unconfined_t:SystemLow-SystemHigh

[root@node1 ~]# cd /usr/local/

[root@node1 local]# ls

apache bin etc lib mysql php src

apr courier-authlib games libexec mysql-5.5.28-linux2.6-i686 sbin

apr-util doc include man mysql-5.6.10-linux-glibc2.5-i686 share

[root@node1 local]# ll

total 152

drwxr-xr-x 13 root root 4096 2019-07-12 apache

drwxr-xr-x 6 root root 4096 2019-07-12 apr

drwxr-xr-x 5 root root 4096 2019-07-12 apr-util

drwxr-xr-x 2 root root 4096 09-15 10:06 bin

drwxr-xr-x 9 root root 4096 07-04 08:26 courier-authlib

drwxr-xr-x 3 root root 4096 09-15 10:06 doc

drwxr-xr-x 2 root root 4096 2009-10-01 etc

drwxr-xr-x 2 root root 4096 2009-10-01 games

drwxr-xr-x 2 root root 4096 2009-10-01 include

drwxr-xr-x 2 root root 4096 2009-10-01 lib

drwxr-xr-x 2 root root 4096 2009-10-01 libexec

drwxr-xr-x 5 root root 4096 2020-05-06 man

drwxr-xr-x 13 mysql mysql 4096 12-23 08:27 mysql

drwxr-xr-x 13 root root 4096 2019-07-12 mysql-5.5.28-linux2.6-i686

drwxr-xr-x 13 root mysql 4096 2019-07-13 mysql-5.6.10-linux-glibc2.5-i686

drwxr-xr-x 9 root root 4096 2019-07-13 php

drwxr-xr-x 2 root root 4096 2009-10-01 sbin

drwxr-xr-x 7 root root 4096 09-15 10:06 share

drwxr-xr-x 2 root root 4096 2009-10-01 src

[root@node1 local]#

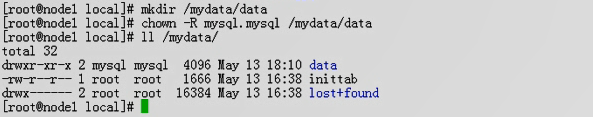

[root@node1 local]# mkdir /mydata/data/

mkdir: cannot create directory `/mydata/data/': File exists

[root@node1 local]#

[root@node1 local]# ll /mydata

total 8

drwxr-xr-x 2 mysql mysql 4096 10-24 18:08 data

[root@node1 local]#

Actually, my MySQL data directory should be /mydata3/data:

[root@node1 local]# mkdir /mydata3/data

[root@node1 local]# chown -R mysql:mysql /mydata3/data

[root@node1 local]#

[root@node1 local]# ll /mydata3/

total 32

drwxr-xr-x 2 mysql mysql 4096 12-23 16:32 data

-rw-r--r-- 1 root root 1666 12-19 10:23 inittab

drwx------ 2 root root 16384 12-19 09:59 lost+found

[root@node1 local]# pwd

/usr/local

[root@node1 local]# cd mysql

[root@node1 mysql]# pwd

/usr/local/mysql

[root@node1 mysql]# scripts/mysql_install_db --user=mysql --datadir=/mydata3/data # initialize MySQL

Installing MySQL system tables...

OK

Filling help tables...

OK

To start mysqld at boot time you have to copy

support-files/mysql.server to the right place for your system

PLEASE REMEMBER TO SET A PASSWORD FOR THE MySQL root USER !

To do so, start the server, then issue the following commands:

./bin/mysqladmin -u root password 'new-password'

./bin/mysqladmin -u root -h node1.magedu.com password 'new-password'

Alternatively you can run:

./bin/mysql_secure_installation

which will also give you the option of removing the test

databases and anonymous user created by default. This is

strongly recommended for production servers.

See the manual for more instructions.

You can start the MySQL daemon with:

cd . ; ./bin/mysqld_safe &

You can test the MySQL daemon with mysql-test-run.pl

cd ./mysql-test ; perl mysql-test-run.pl

Please report any problems with the ./bin/mysqlbug script!

Edit the configuration file

[root@node1 mysql]# vim /etc/my.cnf

...................

[mysqld]

...................

datadir = /mydata3/data

...................

[root@node1 mysql]# service mysqld restart # this fails; what now?

MySQL server PID file could not be found! [FAILED]

Starting MySQL..The server quit without updating PID file (/mydata3/data/node1.magedu.com.pid). [FAILED]

[root@node1 mysql]#

[root@node1 mysql]# mysql -u root -p # error: cannot connect

Enter password:

ERROR 2002 (HY000): Can't connect to local MySQL server through socket '/usr/local/mysql/mysql.sock' (2)

[root@node1 mysql]#

[root@node1 mysql]# tail /mydata3/data/node1.magedu.com.err # check the log: another mysqld is already listening on port 3306

201223 16:49:37 [Note] Server socket created on IP: '0.0.0.0'.

201223 16:49:37 [ERROR] Can't start server: Bind on TCP/IP port: Address already in use

201223 16:49:37 [ERROR] Do you already have another mysqld server running on port: 3306 ?

201223 16:49:37 [ERROR] Aborting

201223 16:49:37 InnoDB: Starting shutdown...

201223 16:49:38 InnoDB: Shutdown completed; log sequence number 1595675

201223 16:49:38 [Note] /usr/local/mysql/bin/mysqld: Shutdown complete

201223 16:49:38 mysqld_safe mysqld from pid file /mydata3/data/node1.magedu.com.pid ended

[root@node1 mysql]#

[root@node1 mysql]# netstat -tnlp | grep mysql

tcp 0 0 0.0.0.0:3306 0.0.0.0:* LISTEN 4526/mysqld

[root@node1 mysql]# kill 4526 # kill the old mysqld process

[root@node1 mysql]#

[root@node1 mysql]# service mysqld restart # now it starts successfully

MySQL server PID file could not be found! [FAILED]

Starting MySQL.. [OK]

[root@node1 mysql]#
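The fix above (find whichever process holds port 3306, then kill it) can be sketched as a small helper. This is only a sketch: `listener_pid` is a hypothetical name, and it assumes the `netstat -tnlp` column layout shown in the transcript above.

```shell
#!/bin/bash
# listener_pid PORT
# Reads `netstat -tnlp` output on stdin and prints the PID of the
# process listening on the given TCP port (hypothetical helper).
listener_pid() {
    local port="$1"
    awk -v port="$port" '
        # column 4 is the local address, column 6 the state,
        # column 7 looks like "4526/mysqld"
        $6 == "LISTEN" && $4 ~ (":" port "$") {
            split($7, parts, "/")
            print parts[1]
        }'
}

# Example use on a node (left commented out so the sketch has no side effects):
#   pid=$(netstat -tnlp | listener_pid 3306)
#   [ -n "$pid" ] && kill "$pid"
```

Anchoring the match on `:PORT` at the end of the local address keeps a listener on, say, port 33060 from being mistaken for 3306.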

[root@node1 mysql]# cat ~/.my.cnf

[client]

user=root

password=zhong1926

host=localhost

[root@node1 mysql]# mysql # by default the client uses the user name and password from /root/.my.cnf

ERROR 1045 (28000): Access denied for user 'root'@'localhost' (using password: YES)

[root@node1 mysql]#

[root@node1 mysql]# mv ~/.my.cnf ~/.my.cnf-bak # rename it so it no longer takes effect (the freshly initialized root account has no password)

[root@node1 mysql]# mysql

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 2

Server version: 5.5.28-log Source distribution

Copyright (c) 2000, 2011, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| test |

+--------------------+

4 rows in set (0.00 sec)

mysql> create database mydb; # create a new database

Query OK, 1 row affected (0.00 sec)

mysql> exit

Bye

[root@node1 mysql]#

MySQL must not start automatically at boot, because we are going to configure it as a resource of the high-availability cluster.

[root@node1 mysql]# service mysqld stop

Shutting down MySQL. [OK]

[root@node1 mysql]# chkconfig mysqld off

[root@node1 mysql]#
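To double-check that the service really is off in every runlevel, the `chkconfig --list` line can be parsed. A minimal sketch, assuming English-locale output (`on`/`off`, for example by running with `LANG=C`); `enabled_runlevels` is a hypothetical helper name.

```shell
#!/bin/bash
# enabled_runlevels
# Reads one line of `chkconfig --list <service>` output on stdin, e.g.
#   mysqld 0:off 1:off 2:on 3:on 4:on 5:on 6:off
# and prints the runlevels that are still "on", separated by spaces.
# An empty line means the service is disabled everywhere.
enabled_runlevels() {
    awk '{
        out = ""
        for (i = 2; i <= NF; i++) {
            split($i, kv, ":")          # kv[1] = runlevel, kv[2] = on/off
            if (kv[2] == "on")
                out = out (out == "" ? "" : " ") kv[1]
        }
        print out
    }'
}

# Example use on a node (commented out so the sketch has no side effects):
#   left=$(LANG=C chkconfig --list mysqld | enabled_runlevels)
#   [ -z "$left" ] || echo "mysqld still enabled in runlevels: $left" >&2
```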

Next, we switch the resources over to the other node (resource migration).

[root@node1 mysql]# crm node standby # put node1 into standby

[root@node1 mysql]# crm status

============

Last updated: Wed Dec 23 17:08:00 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Node node1.magedu.com: standby

Online: [ node2.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node2.magedu.com ] # the master is now on node2

Stopped: [ mysqldrbd:1 ]

mystore (ocf::heartbeat:Filesystem): Started node2.magedu.com # the filesystem is now mounted on node2

[root@node1 mysql]#

[root@node1 mysql]# crm node online # bring node1 back online

[root@node1 mysql]# crm status

============

Last updated: Wed Dec 23 17:10:05 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd # confirm that node2 is now the master

Masters: [ node2.magedu.com ]

Slaves: [ node1.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node2.magedu.com

[root@node1 mysql]#

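We also need to make sure the MySQL service works properly on node2.

On the second node, 192.168.0.55: install and configure MySQL

[root@node2 ~]# id mysql # the mysql user's IDs match those on node1; the uid and gid must be identical on both nodes because both write to the same shared storage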

uid=306(mysql) gid=306(mysql) groups=306(mysql) context=root:system_r:unconfined_t:SystemLow-SystemHigh

[root@node2 ~]#
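The uid/gid check can be automated by comparing the `id` output of both nodes. A small sketch under stated assumptions: `ids_from_id_output` is a hypothetical helper, and passwordless ssh from node1 to node2 (as used elsewhere in these notes) is assumed for the commented usage.

```shell
#!/bin/bash
# ids_from_id_output
# Reads a line of `id <user>` output on stdin, e.g.
#   uid=306(mysql) gid=306(mysql) groups=306(mysql)
# and prints "uid:gid" (here "306:306").
ids_from_id_output() {
    sed -n 's/^uid=\([0-9]*\)[^ ]* gid=\([0-9]*\).*/\1:\2/p'
}

# Example use from node1 (commented out so the sketch has no side effects):
#   local_ids=$(id mysql | ids_from_id_output)
#   remote_ids=$(ssh node2 'id mysql' | ids_from_id_output)
#   [ "$local_ids" = "$remote_ids" ] || echo "mysql uid/gid differ between nodes" >&2
```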

MySQL is already installed

[root@node2 ~]# cd /usr/local/mysql

[root@node2 mysql]# ll

total 172

drwxr-xr-x 2 mysql mysql 4096 09-15 12:27 bin

-rw-r--r-- 1 mysql mysql 17987 2012-08-29 COPYING

drwxr-xr-x 4 mysql mysql 4096 09-15 14:05 data

drwxr-xr-x 2 mysql mysql 4096 09-15 13:52 docs

drwxr-xr-x 3 mysql mysql 4096 09-15 12:26 include

-rw-r--r-- 1 mysql mysql 7604 2012-08-29 INSTALL-BINARY

drwxr-xr-x 3 mysql mysql 4096 09-15 12:27 lib

drwxr-xr-x 4 mysql mysql 4096 09-15 12:27 man

srwxrwxrwx 1 mysql mysql 0 12-22 09:27 mysql.sock

drwxr-xr-x 10 mysql mysql 4096 09-15 12:27 mysql-test

-rw-r--r-- 1 mysql mysql 2552 2012-08-29 README

drwxr-xr-x 2 mysql mysql 4096 09-15 12:27 scripts

drwxr-xr-x 27 mysql mysql 4096 09-15 12:27 share

drwxr-xr-x 4 mysql mysql 4096 09-15 12:27 sql-bench

drwxr-xr-x 2 mysql mysql 4096 09-15 12:27 support-files

-rw-r--r-- 1 root root 31940 09-19 18:16 test.txt

[root@node2 mysql]#

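# MySQL does not need to be initialized again (the data directory on the DRBD device was already initialized from node1)

Rename ~/.my.cnf on node2 as well, since the client uses the user name and password from /root/.my.cnf by default

[root@node1 mysql]# ssh node2 'mv ~/.my.cnf ~/.my.cnf-bak'

[root@node2 mysql]# vim /etc/my.cnf # edit the configuration file; if my.cnf lived on the shared storage it would not have to be edited twice, which is the recommended layout for production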

...................

[mysqld]

...................

datadir = /mydata3/data

...................

[root@node2 mysql]#

[root@node2 ~]# service mysqld restart # same error as on the first node, so the same fix applies

MySQL server PID file could not be found! [FAILED]

Starting MySQL...The server quit without updating PID file (/mydata3/data/node2.magedu.com.pid). [FAILED]

[root@node2 ~]#

[root@node2 ~]# tail /mydata3/data/node2.magedu.com.err

201223 17:17:16 [Note] Server socket created on IP: '0.0.0.0'.

201223 17:17:16 [ERROR] Can't start server: Bind on TCP/IP port: Address already in use

201223 17:17:16 [ERROR] Do you already have another mysqld server running on port: 3306 ?

201223 17:17:16 [ERROR] Aborting

201223 17:17:16 InnoDB: Starting shutdown...

201223 17:17:17 InnoDB: Shutdown completed; log sequence number 1595675

201223 17:17:17 [Note] /usr/local/mysql/bin/mysqld: Shutdown complete

201223 17:17:17 mysqld_safe mysqld from pid file /mydata3/data/node2.magedu.com.pid ended

[root@node2 ~]#

[root@node2 ~]# netstat -tnlp | grep mysql

tcp 0 0 0.0.0.0:3306 0.0.0.0:* LISTEN 4519/mysqld

[root@node2 ~]# kill 4519

[root@node2 ~]#

[root@node2 ~]# service mysqld restart # now it starts successfully

MySQL server PID file could not be found! [FAILED]

Starting MySQL.. [OK]

[root@node2 ~]#

[root@node2 ~]# mysql

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 1

Server version: 5.5.28-log Source distribution

Copyright (c) 2000, 2011, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> show databases; # mydb is visible here

+--------------------+

| Database |

+--------------------+

| information_schema |

| mydb |

| mysql |

| performance_schema |

| test |

+--------------------+

5 rows in set (0.02 sec)

mysql> \q

Bye

[root@node2 ~]# service mysqld stop # stop the service

Shutting down MySQL. [OK]

[root@node2 ~]#

[root@node2 ~]# chkconfig --list mysqld

mysqld 0:off 1:off 2:on 3:on 4:on 5:on 6:off

[root@node2 ~]# chkconfig mysqld off # disable start at boot

[root@node2 ~]# crm status

============

Last updated: Wed Dec 30 10:05:08 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

2 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node2.magedu.com ]

Slaves: [ node1.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node2.magedu.com

[root@node2 ~]#

The cluster resource we configure next must run on the same node as the DRBD master.

On the second node, 192.168.0.55

[root@node2 ~]# crm

crm(live)# configure

crm(live)configure# primitive mysqld ocf:lsb:mysqld # define a primitive resource: mysqld is the resource name and ocf:lsb:mysqld the resource agent (wrong, see the error below); no op is defined here

lrmadmin[18452]: 2020/12/30_10:11:20 ERROR: lrm_get_rsc_type_metadata(578): got a return code HA_FAIL from a reply message of rmetadata with function get_ret_from_msg.

ERROR: ocf:lsb:mysqld: could not parse meta-data:

ERROR: ocf:lsb:mysqld: no such resource agent

crm(live)configure#

crm(live)configure# primitive mysqld lsb:mysqld # the agent is lsb:mysqld, not ocf:lsb:mysqld

crm(live)configure#

crm(live)configure# verify # do not commit yet

crm(live)configure#

# Keep mysqld together with mystore, because mystore always runs on the DRBD master node

# First the DRBD master promotion completes, then mystore is mounted, and only then can mysqld start

crm(live)configure# colocation mysqld_with_mystore inf: mysqld mystore # colocation constraint

crm(live)configure#

crm(live)configure# show xml

.................................

<constraints>

<rsc_order first="ms_mysqldrbd" first-action="promote" id="mystore_after_ms_mysqldrbd" score="INFINITY" then="mystore" then-action="start"/>

<rsc_colocation id="mystore_with_ms_mysqldrbd" rsc="mystore" score="INFINITY" with-rsc="ms_mysqldrbd" with-rsc-role="Master"/>

<rsc_colocation id="mysqld_with_mystore" rsc="mysqld" score="INFINITY" with-rsc="mystore"/> # mysqld must run together with mystore; with-rsc here acts as the base resource that mysqld follows

</constraints>

.................................

crm(live)configure# order mysqld_after_mystore mandatory: mystore mysqld # ordering constraint: start mystore first, then mysqld; the start action is implied and omitted

crm(live)configure#

crm(live)configure# verify

crm(live)configure# show xml

.................................

<constraints>

<rsc_order first="ms_mysqldrbd" first-action="promote" id="mystore_after_ms_mysqldrbd" score="INFINITY" then="mystore" then-action="start"/>

<rsc_colocation id="mystore_with_ms_mysqldrbd" rsc="mystore" score="INFINITY" with-rsc="ms_mysqldrbd" with-rsc-role="Master"/>

<rsc_colocation id="mysqld_with_mystore" rsc="mysqld" score="INFINITY" with-rsc="mystore"/>

<rsc_order first="mystore" id="mysqld_after_mystore" score="INFINITY" then="mysqld"/> # the order is mystore first, then mysqld

</constraints>

.................................

crm(live)configure# commit # commit the changes

crm(live)configure#
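The three statements entered interactively above can also be kept in a file and loaded in one step. A minimal sketch, assuming the crm shell's `configure load update` subcommand is available in this version; `mysqld_crm_config` and `/tmp/mysqld.crm` are hypothetical names.

```shell
#!/bin/bash
# mysqld_crm_config
# Prints the crm configure statements used in these notes for the
# mysqld resource: the primitive, its colocation with mystore, and
# the ordering that starts mystore before mysqld.
mysqld_crm_config() {
    cat <<'EOF'
primitive mysqld lsb:mysqld
colocation mysqld_with_mystore inf: mysqld mystore
order mysqld_after_mystore mandatory: mystore mysqld
EOF
}

# Example use on a cluster node (commented out so the sketch has no side effects):
#   mysqld_crm_config > /tmp/mysqld.crm
#   crm configure load update /tmp/mysqld.crm
#   crm configure verify
```

Keeping the statements in a file makes it easier to review them before they reach the live CIB.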

crm(live)configure# cd

crm(live)# status

============

Last updated: Wed Dec 30 10:28:12 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

3 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node2.magedu.com ] # the DRBD master is node2

Slaves: [ node1.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node2.magedu.com # mystore is on node2

mysqld (lsb:mysqld): Started node2.magedu.com # mysqld is on node2

crm(live)#

On the second node, 192.168.0.55, in a second terminal window

[root@node2 ~]# /usr/local/mysql/bin/mysql # MySQL is accessible

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 2

Server version: 5.5.28-log Source distribution

Copyright (c) 2000, 2012, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql>

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mydb |

| mysql |

| performance_schema |

| test |

+--------------------+

5 rows in set (0.00 sec)

mysql>

mysql> drop database mydb;

Query OK, 0 rows affected (0.03 sec)

mysql> create database hellodb;

Query OK, 1 row affected (0.00 sec)

mysql>

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| hellodb |

| mysql |

| performance_schema |

| test |

+--------------------+

5 rows in set (0.00 sec)

mysql>

mysql> exit

Bye

[root@node2 ~]# crm node standby # switch roles

[root@node2 ~]# crm status # all resources have now started on node1

============

Last updated: Wed Dec 30 10:43:05 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

3 Resources configured.

============

Node node2.magedu.com: standby

Online: [ node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node1.magedu.com ]

Stopped: [ mysqldrbd:0 ]

mystore (ocf::heartbeat:Filesystem): Started node1.magedu.com

mysqld (lsb:mysqld): Started node1.magedu.com

[root@node2 ~]#

[root@node2 ~]# crm node online # bring node2 back online

[root@node2 ~]# crm status # the resources stay on node1

============

Last updated: Wed Dec 30 10:45:39 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

3 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node1.magedu.com ]

Slaves: [ node2.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node1.magedu.com

mysqld (lsb:mysqld): Started node1.magedu.com

[root@node2 ~]#

On the first node, 192.168.0.45

[root@node1 bin]# /usr/local/mysql/bin/mysql

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 3

Server version: 5.5.28-log Source distribution

Copyright (c) 2000, 2012, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> show databases; # why do I not see hellodb here, while Ma Ge did?

+--------------------+

| Database |

+--------------------+

| information_schema |

| mydb |

| mysql |

| performance_schema |

| test |

+--------------------+

5 rows in set (0.02 sec)

mysql>

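On the first node, 192.168.0.45

[root@node1 ~]# drbd-overview # this shows that something is wrong (in the end I ran crm configure edit, that is, edited the CIB configuration, deleted the line about cli-standby- and the other standby-related entries as well as the last-lrm-refresh entry, then restarted both nodes) (loading these resources can be slow, so wait a while)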

0:mydrbd Unconfigured . . . .

[root@node1 ~]#

At this point there is no VIP yet; clients access a high-availability cluster through its VIP address.

On the first node, 192.168.0.45

crm(live)configure# primitive myip ocf:heartbeat:IPaddr params ip=192.168.0.50 nic=eth0 cidr_netmask=255.255.255.0

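# myip is the resource name; ocf:heartbeat:IPaddr is the resource agent; params: ip, nic, cidr_netmask

crm(live)configure# verify # verify only; do not commit yet

crm(live)configure#

myip should run together with the DRBD master (which of the two is listed first does not matter, meaning an order constraint is optional)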

crm(live)configure# colocation myip_with_ms_mysqldrbd inf: ms_mysqldrbd:Master myip

crm(live)configure#

crm(live)configure# verify

crm(live)configure# show xml

.....................................

<constraints>

<rsc_colocation id="mysqld_with_mystore" rsc="mysqld" score="INFINITY" with-rsc="mystore"/>

<rsc_colocation id="mystore_with_ms_mysqldrbd" rsc="mystore" score="INFINITY" with-rsc="ms_mysqldrbd" with-rsc-role="Master"/>

<rsc_colocation id="myip_with_ms_mysqldrbd" rsc="ms_mysqldrbd" rsc-role="Master" score="INFINITY" with-rsc="myip"/>

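# This says the DRBD master must be placed with myip. (Ma Ge said it does not matter which comes first, but logically it feels more sensible to me for myip to follow the DRBD master.)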

<rsc_order first="ms_mysqldrbd" first-action="promote" id="mystore_after_ms_mysqldrbd" score="INFINITY" then="mystore" then-action="start"/>

<rsc_order first="mystore" id="mysqld_after_mystore" score="INFINITY" then="mysqld"/>

</constraints>

.....................................
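For comparison, the colocation can be written so that myip follows the DRBD master, the same direction as mystore_with_ms_mysqldrbd in the XML above. A sketch only: `vip_crm_config` and `/tmp/vip.crm` are hypothetical names, and loading through `crm configure load update` is an assumption about this crm shell version.

```shell
#!/bin/bash
# vip_crm_config
# Prints the VIP primitive from these notes plus a colocation in which
# myip is the dependent resource and ms_mysqldrbd:Master is the one it
# follows (rsc="myip", with-rsc="ms_mysqldrbd", with-rsc-role="Master").
vip_crm_config() {
    cat <<'EOF'
primitive myip ocf:heartbeat:IPaddr params ip=192.168.0.50 nic=eth0 cidr_netmask=255.255.255.0
colocation myip_with_ms_mysqldrbd inf: myip ms_mysqldrbd:Master
EOF
}

# Example use on a cluster node (commented out so the sketch has no side effects):
#   vip_crm_config > /tmp/vip.crm
#   crm configure load update /tmp/vip.crm
```

With a score of inf either direction keeps the two on the same node; the direction only decides which resource is placed first and which one follows.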

crm(live)# status

============

Last updated: Wed Dec 30 14:36:16 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

4 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd # all resources are on node1

Masters: [ node1.magedu.com ]

Slaves: [ node2.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node1.magedu.com

mysqld (lsb:mysqld): Started node1.magedu.com

myip (ocf::heartbeat:IPaddr): Started node1.magedu.com

crm(live)#

On the first node, 192.168.0.45

mysql> grant all on *.* to root@'%' identified by 'aaaaaa';

Query OK, 0 rows affected (0.00 sec)

mysql> flush privileges;

Query OK, 0 rows affected (0.00 sec)

mysql>

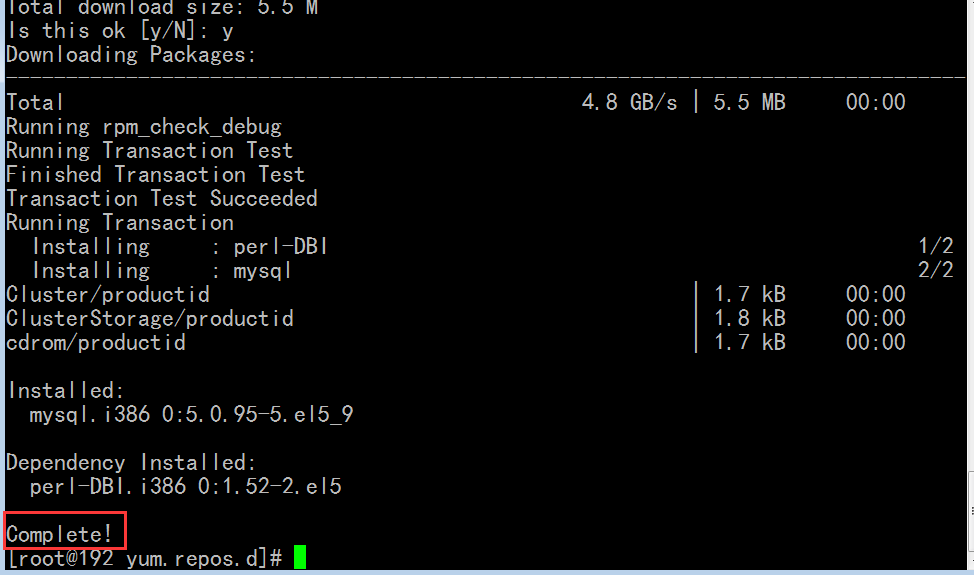

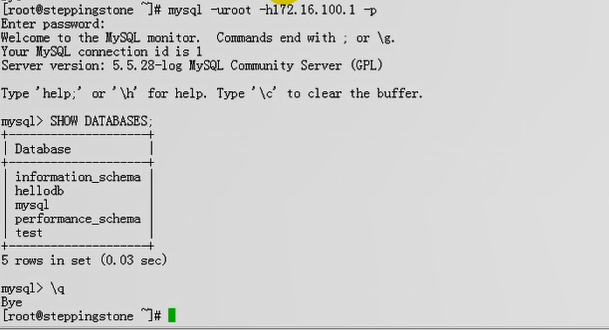

On another machine, 192.168.0.75

[root@192 ~]# yum install mysql

[root@192 yum.repos.d]# mysql -uroot -h 192.168.0.50 -p

Enter password: aaaaaa

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 2

Server version: 5.5.28-log Source distribution

Copyright (c) 2000, 2011, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mydb |

| mydb1 |

| mysql |

| performance_schema |

| test |

+--------------------+

6 rows in set (0.03 sec)

mysql>

On the first node, 192.168.0.45

[root@node1 ~]# crm status

============

Last updated: Wed Dec 30 14:51:30 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

4 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node1.magedu.com ]

Slaves: [ node2.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node1.magedu.com

mysqld (lsb:mysqld): Started node1.magedu.com

myip (ocf::heartbeat:IPaddr): Started node1.magedu.com

[root@node1 ~]# crm node standby # put node1 into standby

[root@node1 ~]# crm status # node2 is now the master

============

Last updated: Wed Dec 30 14:52:35 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

4 Resources configured.

============

Node node1.magedu.com: standby

Online: [ node2.magedu.com ]

Master/Slave Set: ms_mysqldrbd

Masters: [ node2.magedu.com ]

Stopped: [ mysqldrbd:0 ]

mystore (ocf::heartbeat:Filesystem): Started node2.magedu.com

mysqld (lsb:mysqld): Started node2.magedu.com

myip (ocf::heartbeat:IPaddr): Started node2.magedu.com

[root@node1 ~]#

[root@node1 ~]# crm node online # bring node1 back online

[root@node1 ~]# crm status

============

Last updated: Wed Dec 30 14:53:25 2020

Stack: openais

Current DC: node1.magedu.com - partition with quorum

Version: 1.0.12-unknown

2 Nodes configured, 2 expected votes

4 Resources configured.

============

Online: [ node2.magedu.com node1.magedu.com ] # node2 is still the master

Master/Slave Set: ms_mysqldrbd

Masters: [ node2.magedu.com ]

Slaves: [ node1.magedu.com ]

mystore (ocf::heartbeat:Filesystem): Started node2.magedu.com

mysqld (lsb:mysqld): Started node2.magedu.com

myip (ocf::heartbeat:IPaddr): Started node2.magedu.com

[root@node1 ~]#

[root@node1 ~]#

On another machine, 192.168.0.75

[root@192 yum.repos.d]# mysql -uroot -h 192.168.0.50 -p # I cannot connect here, although it works for Ma Ge (the note highlighted in red earlier hints at the cause)

Enter password:

ERROR 1130 (00000): Host '192.168.0.75' is not allowed to connect to this MySQL server

[root@192 yum.repos.d]#

The figure below shows Ma Ge's result

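Troubleshooting approach: when a resource's state goes wrong, the fix mostly comes down to cleaning up the state information of the resource and of the node.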
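Cleaning up a resource's recorded state can be sketched with the crm shell's `resource cleanup` subcommand. This is a sketch, not the exact procedure from the lecture: `cleanup_resource_state` is a hypothetical wrapper, and the resource and node names in the usage lines are just the ones from these notes.

```shell
#!/bin/bash
# cleanup_resource_state RESOURCE [NODE]
# Asks the cluster to forget the recorded operation history (including
# failures) of a resource, optionally only on one node, so that the
# resource's real state is probed again.
cleanup_resource_state() {
    local rsc="$1" node="$2"
    if [ -n "$node" ]; then
        crm resource cleanup "$rsc" "$node"
    else
        crm resource cleanup "$rsc"
    fi
}

# Example use on a cluster node (commented out so the sketch has no side effects):
#   cleanup_resource_state mysqld node1.magedu.com
#   cleanup_resource_state mystore
#   crm status
```

Node-level state has a counterpart in `crm node clearstate`, which marks a node as clean and offline, so it is better reserved for a node that is really down.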

Dual-primary DRBD will be covered after cluster file systems; for that model, shared storage is provided mainly through an IP SAN.